
Why premature realism collapses the option space — and how AI changes the economics of urban simulation.

01 | The Framework

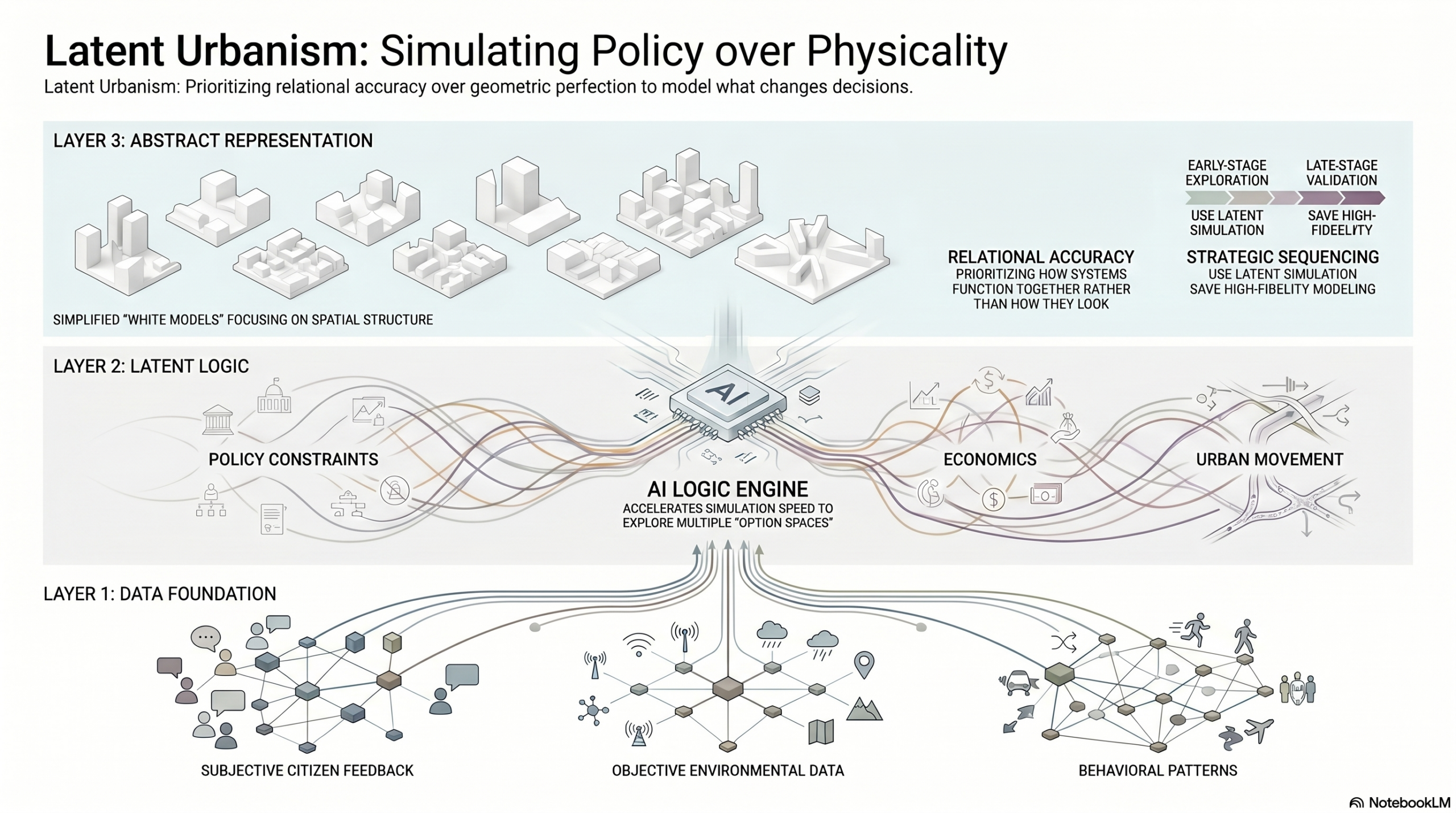

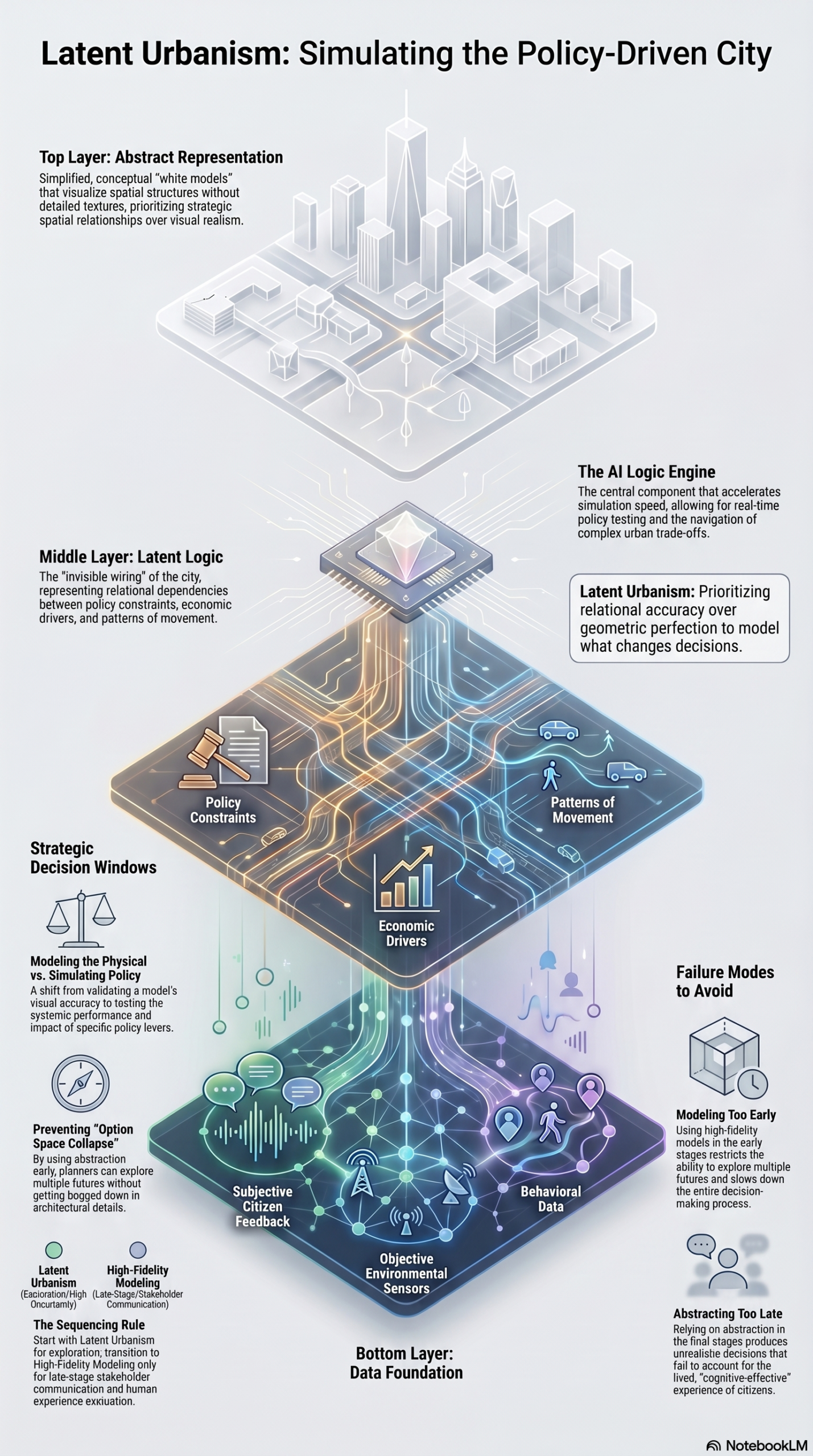

Do not model everything. Model what changes decisions. Geometric resolution is a communication tool, not an analytical one. Conflating the two is how the model becomes the project.

This blog tracks a sequence. Each post is a module in a larger argument about how spatial decisions get made and how AI is changing the conditions under which they’re made well or badly.

The previous post introduced the Option Space: the argument that the structural failure in urban decision-making is not choosing wrong but closing down alternatives too early. This post goes a step further. If the Option Space is what we are trying to protect, Latent Urbanism is the operational logic that makes protecting it possible, and AI is what makes that logic run fast enough to matter.

If the last post was about the necessity of having options, this one is about the economy of representation we need to keep those options from collapsing under their own weight.

02 | Case Study

Singapore’s Virtual Singapore — a model that arrived too early

Virtual Singapore is one of the most cited urban digital twin projects in existence. Launched in 2014 and completed around 2018, it covers the entire city-state at high semantic and geometric fidelity: buildings, terrain, vegetation, underground infrastructure. By any technical measure, it is impressive work.

Virtual Singapore is also a good illustration of a structural tendency that follows high-fidelity platforms: the more complete and detailed the model, the more it gravitates toward siloed, end-state use rather than iterative, early-stage decision support. Each agency uses a fragment for its specific purpose. The unified decision environment the platform was designed to enable is a harder problem than the model itself – because it requires workflows that are fast, revisable, and cross-functional, and those demands sit in tension with what a comprehensive high-fidelity model is optimised to do.

Virtual Singapore: whole-city 3D model at semantic detail

A complete city 3D model with high geometric and semantic fidelity, covering buildings, terrain, vegetation, and underground infrastructure. Intended as a unified decision-support environment across government agencies.

Virtual Singapore demonstrates a pattern that appears consistently across the Digital Twin literature: the model was built for the most demanding end-state use case first. The result is a system too heavy for the iterative, early-stage decisions that would benefit most from a simulation environment. High fidelity arrived before the workflows that could use it.

This is not a critique of the project. It is a demonstration of a sequencing problem. The research literature shows the same pattern at scale: of 84 Urban Digital Twin studies reviewed in a recent systematic analysis, 69 defaulted to high-fidelity, realistic models. The technology-centric narrative dominates. Most platforms are optimised for the final output rather than for the decisions that precede it.

The question is not what the model can represent — it is what decision the model is being built to support, and at what stage of the process that decision actually happens.

03 | The Resolution Layer

We’ve spent too many hours watching software crawl while trying to navigate a model that is far too detailed for its current stage. There is a specific kind of exhaustion that comes from polishing textures on a concept that hasn’t even settled on its basic logic.

The research confirms what practice already knows.

Digital Twin studies have surged since 2020, but the vast majority still default to high-fidelity, realistic models: a technology-centric approach that conflates resolution with rigour. The result is systems too slow for the decisions they are supposed to support. When you spend your iteration cycles managing geometric complexity, you have none left for the question that actually matters: which future are we choosing?

This is what our Block Decision Architecture (BDA), a framework we have been developing recently, calls the “Realism Trap”. Over-modelling doesn’t produce better decisions. It produces decision paralysis, the kind where the model becomes the project.

The correction is not about lowering standards. It is about understanding what kind of accuracy the current stage of work actually needs.

Modelling the city means capturing physical form — geometry, texture, spatial relationships, material conditions. Simulating the city means something different: it means representing how policy levers interact, how movement patterns respond to intervention, how variables propagate through a system. The two are not the same operation.

The rule that follows is precise: do not model everything. Model what changes decisions.

A simulation that captures the relational logic between density, transit access, and energy demand, even at low geometric resolution, is more useful for early-stage planning than a photorealistic model of a block that cannot be modified in under four hours. Relational accuracy is the variable that matters. Visual accuracy is a later problem.
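To make the distinction concrete, here is a minimal Python sketch of a relational model that contains no geometry at all. The `Block` fields, the linear transit-access decay and both coefficients are hypothetical assumptions chosen for illustration, not the BDA model or calibrated data. What it shows is how one lever (floor area ratio) propagates to energy demand and to the floor area that transit can actually serve.

```python
from dataclasses import dataclass, replace

# Hypothetical coefficients, chosen for illustration only; not calibrated data.
ENERGY_KWH_PER_M2 = 120.0    # assumed annual energy demand per m2 of floor area
TRANSIT_CATCHMENT_M = 800.0  # assumed walking distance at which transit access reaches zero


@dataclass(frozen=True)
class Block:
    """A block reduced to the attributes that change decisions; no geometry at all."""
    name: str
    floor_area_ratio: float    # built floor area divided by site area
    site_area_m2: float
    transit_distance_m: float  # walking distance to the nearest station


def transit_access(b: Block) -> float:
    """Linear decay from 1.0 at the station to 0.0 at the catchment edge."""
    return max(0.0, 1.0 - b.transit_distance_m / TRANSIT_CATCHMENT_M)


def evaluate(b: Block) -> dict[str, float]:
    """Propagate density through to energy demand and transit-served floor area."""
    floor_area = b.floor_area_ratio * b.site_area_m2
    return {
        "floor_area_m2": floor_area,
        "energy_mwh": floor_area * ENERGY_KWH_PER_M2 / 1000.0,
        "transit_served_m2": floor_area * transit_access(b),
    }


# Apply the same policy lever (doubling FAR) to two otherwise identical blocks.
near = Block("near station", 2.0, 10_000, 200)
far = Block("far from station", 2.0, 10_000, 1_000)
for block in (near, far):
    before = evaluate(block)
    after = evaluate(replace(block, floor_area_ratio=4.0))
    print(block.name, {k: after[k] - before[k] for k in before})
```

The same lever adds the same energy demand to both blocks, but only the block near the station converts that cost into transit-served floor area. That is the kind of relationship that changes a decision, and it surfaces without a single polygon.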

This is where AI enters: not as an image generator, but as the logic engine that makes relational simulation viable at city scale. The assumption that high-fidelity data and simulation speed are fundamentally in tension is being overturned. AI-assisted workflows allow planners to evaluate building performance, solar exposure, and dozens of spatial metrics in minutes rather than days. The iteration cycle that used to be the bottleneck becomes the competitive advantage. What changes is not the amount of data being handled; it is the speed at which conclusions can be drawn from it and revised.

The sequencing rule that emerges from this is the Resolution Layer. For early-stage planning and city-scale policy testing, the correct tool is abstraction: Latent Urbanism. Low geometric fidelity, high relational intelligence. The model runs fast, parameters can be modified, and trade-offs remain visible. You keep the option space open precisely because the representation doesn’t freeze it.
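One way to keep trade-offs visible rather than collapsing them into a single winner is to retain every Pareto-optimal option and discard only those that another option beats on every metric. The sketch below assumes two hypothetical metrics (housing units and annual energy demand) and invented option values; it illustrates the principle and is not a BDA tool.

```python
from typing import NamedTuple


class Option(NamedTuple):
    name: str
    housing_units: int  # higher is better
    energy_mwh: float   # annual demand; lower is better


def dominates(a: Option, b: Option) -> bool:
    """True if a is no worse than b on every metric and strictly better on at least one."""
    no_worse = a.housing_units >= b.housing_units and a.energy_mwh <= b.energy_mwh
    strictly_better = a.housing_units > b.housing_units or a.energy_mwh < b.energy_mwh
    return no_worse and strictly_better


def pareto_front(options: list[Option]) -> list[Option]:
    """Drop only options that another option beats outright; keep every genuine trade-off."""
    return [o for o in options if not any(dominates(p, o) for p in options)]


# Hypothetical options for one district; the numbers are illustrative only.
options = [
    Option("low-rise", 300, 1800.0),
    Option("mid-rise", 600, 2600.0),
    Option("tower", 900, 4200.0),
    Option("mid-rise, poor orientation", 600, 3100.0),
    Option("dispersed", 250, 2000.0),
]
for o in pareto_front(options):
    print(o.name, o.housing_units, o.energy_mwh)
```

Three options survive, and none of them is “the answer”: each buys housing at a different energy cost. Choosing among them is the strategic decision; the filter only removes options nobody should want.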

Realism enters later, at the validation stage, and for stakeholder communication, where human perception and cognitive-affective response become relevant. At that point, the strategic decisions have already been made. The high-fidelity model is no longer driving the logic. It is communicating it. That is a very different function, and it deserves a different tool.

The goal is to stop treating the final output as a static image and start treating it as a functioning system. Our value as practitioners isn’t in the number of polygons we can generate; it’s in the clarity of the trade-offs we can visualise. The architecture gets more focused when we stop confusing visual detail with strategic depth.

04 | Tools

Two workflows for relational simulation — without the geometric overhead

This section reviews tools and workflows specifically framed for spatial intelligence work. This month: two approaches that operationalise the Resolution Layer logic at different scales.

Geospatial AI agents for early-stage land-use analysis

AI agents applied to geospatial datasets (parcel data, mobility flows, environmental sensors) can automate asset identification and run relational simulations across dozens of variables simultaneously. Policy scenario testing at block and district scale becomes a day-one activity rather than a late-stage one.
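Underneath the agent layer, day-one policy testing amounts to sweeping lever settings across parcel data and comparing outcomes. The sketch below is not an AI agent; it is the kind of scenario sweep an agent might automate. The parcel records, the `upzone` rule and the radius and cap values are all hypothetical.

```python
from itertools import product

# Hypothetical parcel records: (id, site area m2, distance to station m, current FAR).
PARCELS = [
    ("P1", 5_000, 150, 1.5),
    ("P2", 8_000, 350, 2.0),
    ("P3", 6_000, 600, 1.0),
    ("P4", 10_000, 900, 0.8),
]


def upzone(radius_m: float, far_cap: float) -> dict[str, float]:
    """Hypothetical transit-oriented lever: parcels within radius_m may build up to far_cap."""
    added_m2 = 0.0
    affected = 0
    for _pid, area, dist, far in PARCELS:
        if dist <= radius_m and far_cap > far:
            added_m2 += (far_cap - far) * area
            affected += 1
    return {"added_floor_area_m2": added_m2, "parcels_affected": affected}


# Sweep every combination of lever settings instead of committing to one up front.
RADII_M = (400, 800)
FAR_CAPS = (3.0, 5.0)
results = {(r, c): upzone(r, c) for r, c in product(RADII_M, FAR_CAPS)}
for (r, c), outcome in results.items():
    print(f"radius {r} m, FAR cap {c}: {outcome}")
```

With four parcels the sweep is trivial; the point is that the same loop scales to more levers and many more parcels, because nothing in it depends on geometric detail.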

Multi-metric building performance evaluation in early design

AI-assisted tools that fuse solar analysis, energy modelling, and programme efficiency metrics into a single early-design environment. Demonstrated potential: dozens of design options evaluated in minutes at schematic stage, with meaningful performance data available before geometric commitment.
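A minimal sketch of multi-metric scoring at schematic stage, assuming three hypothetical metrics and made-up values for three options: each metric is min-max normalised so that 1.0 is always the best option on that metric, then combined with weights. The weights are an explicit value judgment, so the per-metric scores are kept alongside the weighted total rather than replaced by it. This is a generic technique, not the method of any particular tool.

```python
# Hypothetical metric set: name -> (weight, higher_is_better). Weights are a value judgment.
METRICS = {
    "solar_kwh_m2": (0.4, True),    # annual irradiation on usable surfaces
    "energy_kwh_m2": (0.4, False),  # operational energy intensity
    "net_to_gross": (0.2, True),    # programme efficiency
}


def normalise(values: list[float], higher_is_better: bool) -> list[float]:
    """Min-max scale to [0, 1] so that 1.0 is always the best option on this metric."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [1.0] * len(values)  # metric does not discriminate between options
    scaled = [(v - lo) / (hi - lo) for v in values]
    return scaled if higher_is_better else [1.0 - s for s in scaled]


def score_options(options: dict[str, dict[str, float]]) -> dict[str, dict[str, float]]:
    """Return per-metric scores plus a weighted total, keeping each metric visible."""
    names = list(options)
    scores: dict[str, dict[str, float]] = {n: {} for n in names}
    for metric, (_weight, higher_is_better) in METRICS.items():
        column = normalise([options[n][metric] for n in names], higher_is_better)
        for n, s in zip(names, column):
            scores[n][metric] = s
    for n in names:
        scores[n]["weighted"] = sum(w * scores[n][m] for m, (w, _) in METRICS.items())
    return scores


# Three hypothetical schematic options; values are illustrative, not measured.
options = {
    "A": {"solar_kwh_m2": 400.0, "energy_kwh_m2": 90.0, "net_to_gross": 0.80},
    "B": {"solar_kwh_m2": 600.0, "energy_kwh_m2": 110.0, "net_to_gross": 0.75},
    "C": {"solar_kwh_m2": 500.0, "energy_kwh_m2": 70.0, "net_to_gross": 0.85},
}
ranked = sorted(score_options(options).items(), key=lambda kv: -kv[1]["weighted"])
for name, row in ranked:
    print(name, {k: round(v, 2) for k, v in row.items()})
```

Option B has the best solar exposure yet ranks second, because it is the worst on energy intensity and programme efficiency. Keeping the per-metric columns in view is what lets a team see why, and argue about the weights rather than hide them.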

What both approaches share is a structural commitment to the Resolution Layer logic: generate decision-quality information before investing in visual resolution. The tools are different. The sequencing principle is the same.

05 | The Open Question

When does abstraction become irresponsible?

The Resolution Layer argument rests on a sequencing logic: use abstraction early, realism late. But this assumes that the transition point is legible: that practitioners know when a decision has been sufficiently stress-tested at the relational level before committing to geometric representation. In practice, that threshold is rarely explicit. Latent Urbanism runs the risk of keeping decisions comfortable and fast-moving precisely when they should encounter the friction of material reality.

The question we haven’t fully resolved: is there a class of spatial decisions – those involving heritage, microclimate, or social density – where geometric fidelity is not just a communication tool but an analytical one? Where the polygon count is not decorative but epistemically necessary?

This is the frontier the BDA research hasn’t settled. If you’ve encountered this threshold in your own work – where the abstract model failed to surface something the detailed model would have caught – we’d like to know about it.
